The Nazca-Desventuradas marine park will cover 297,000 km², becoming the largest in Latin America.

By Lorena Guzmán H., November 4, 2015

By 2020, 10% of the world's oceans should be under protection, or at least that is the United Nations' goal. However, areas with some degree of control currently cover only 3.5%, while fully protected areas add up to barely 1.6%. Even so, every step counts, and Chile has just taken one that could inspire others.

At the second international Our Ocean conference, held in early October in the Chilean port of Valparaíso, Chilean President Michelle Bachelet not only opened the meeting but also announced three new marine protected areas in Chilean territory. These "will entail the protection of a total area of more than one million square kilometers, together constituting one of the largest marine protection areas in the world," the president said.

The Nazca-Desventuradas marine park will cover 297,000 km², becoming the largest in Latin America. It will be joined by a network of protected areas in the Juan Fernández archipelago that will keep 13,000 km² under protection. Finally, there is the proposal to create an immense protected area of about 720,000 km² around Rapa Nui (Easter Island). If approved by the local community, it would become the largest in the Americas and one of the three largest in the world, surpassing even the Nazca-Desventuradas marine park.

The marine park around Easter Island would be added to an already established 150,000 km² protected area, the Motu Motiro Hiva Marine Park, which includes Salas y Gómez Island.
However, local residents were not involved when that park was created in 2010. This has led to several conflicts that interfere with its operation, all the more so because International Labour Organization (ILO) Convention 169 on indigenous and tribal peoples requires a public consultation with those directly affected. That is why, in June 2014, the "Mesa del Mar" (Sea Roundtable) was created. It brought islanders, scientists and political authorities together to discuss the new protected area, which would add another 570,000 km² in the zone. The islanders will vote in 2016 on the proposal announced by President Bachelet, and that vote will decide whether the area is created. If all three parks materialize, Chile will have protected 25.3% of its marine territory, far surpassing the United Kingdom's 12.6%, the United States' 15.5% and New Zealand's 15.2%.

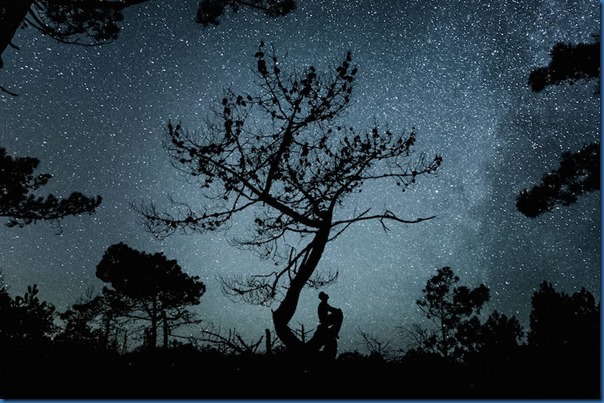

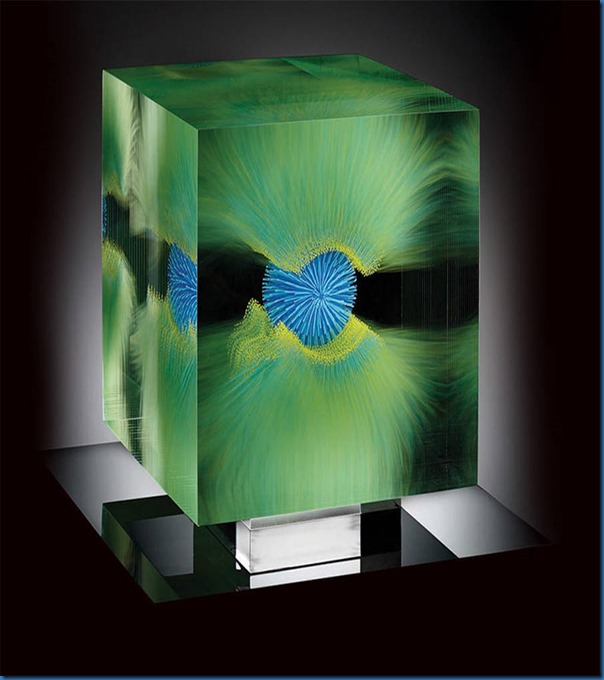

The deep seafloor around San Félix and San Ambrosio, part of the Nazca-Desventuradas marine park, is in an exceptional state of conservation and shows no signs of human impact. Photo by Enric Sala/National Geographic

Protecting biodiversity

According to data compiled by the Partnership for Interdisciplinary Studies of Coastal Oceans (PISCO), studies of 124 marine parks around the world show that once protection is established, biomass increases by an average of 446% and density by 166%. Over the same period, animal size increases by 28% and diversity by 21%. For species that had been severely overexploited, biomass has in some cases increased by as much as 1,000%.

That is exactly what Chile hopes to achieve. For example, the report PISCO prepared and presented at the "Mesa del Mar" indicates that protecting the corvina, a fish found in Chilean waters, would make its reproductive capacity soar. A 50-centimeter individual can produce 6,000 offspring, while a 70-centimeter one can produce 43,000.

But that is only part of what protection can achieve. It can make an enormous difference, especially where the level of endemism is high, as it is in the Nazca-Desventuradas marine park. In 2013, Oceana and National Geographic carried out a scientific expedition in the area and found a reef fish hotspot where 72% of the species observed are known only from that area. "These are the highest percentages of endemism ever recorded in the sea," the report says.

"It is also an endemic feeding and breeding area for juvenile jack mackerel (jurel), which would later travel from Juan Fernández to New Zealand," adds Liesbeth van der Meer, director of fisheries campaigns at Oceana in Chile.
The area is also a biological corridor for various species. That makes it a clear example of how the benefits extend beyond the protected areas themselves.

Much to be done

"It is very important to create protected areas to safeguard biodiversity," says Jane Lubchenco, a professor at Oregon State University in the United States and a member of the working group that prepared the report for the creation of the Easter Island park. The problem, however, is that most waters have no protection at all. Protected areas made a big leap between 1995 and 2005, growing from 0.1% to 1.6%, but that is not enough, says Lubchenco. She made this case with Kirsten Grorud-Colvert in a paper published in October in the journal Science. There, they argue that if estimates of how much ocean should be protected matched the figures science provides, the strictly protected area would be between 20% and 50%.

Latin America and the Caribbean are no exception to this global reality. According to PISCO data, marine parks in the region cover less than 0.1% of the exclusive economic zone, and most are small: half of them cover less than 7 km². Of 255 marine parks, only twelve are patrolled regularly to prevent illegal fishing.

http://www.scientificamerican.com/espanol/noticias/chile-tendra-la-mayor-area-marina-protegida-de-latinoamerica/?WT.mc_id=SAES_ESPWKLY_20151104